What Influences Brand Mentions in ChatGPT, Gemini & Claude?

Want to rank in ChatGPT, Gemini, and Claude? Discover the core LLM ranking logic, from entity clarity to trusted citations, that drives AI brand mentions.

We've spent the last year obsessing over a single question: why does ChatGPT mention some brands and completely ignore others? And the same goes for Gemini and Claude.

It's not academic curiosity. We built Genezio specifically to track this—running thousands of prompts across AI engines every week, logging which brands get named, which get skipped, and trying to reverse-engineer the patterns behind it all.

Here's what we've actually found.

It Starts Before the Answer Gets Written

Most people think about AI brand mentions like they think about Google rankings—optimize your page, move up the results. But that mental model is wrong.

LLMs don't scan a ranked list of pages. They retrieve information first, then synthesize a response. That retrieval step is where everything gets decided, and it works differently across engines.

ChatGPT, for instance, triggers real-time web searches for many queries (especially through the web interface). Gemini pulls from Google's index in ways that mirror but don't replicate traditional search. Claude tends to lean more heavily on its training data, with search augmentation behavior that varies depending on the interface and query type.

The point is: if your brand isn't present in whatever retrieval pathway a particular engine activates for a particular query, you won't appear in the answer. Full stop. It doesn't matter how good your content is.

We've seen brands with stellar SEO rankings that get zero mentions in AI responses, simply because they don't show up in the right retrieval pathway. That disconnect between "ranking well on Google" and "being mentioned by AI" is real, and it surprises a lot of marketing teams.

The Content That Actually Gets Picked Up

LLMs love structured content. But not in the way you might think.

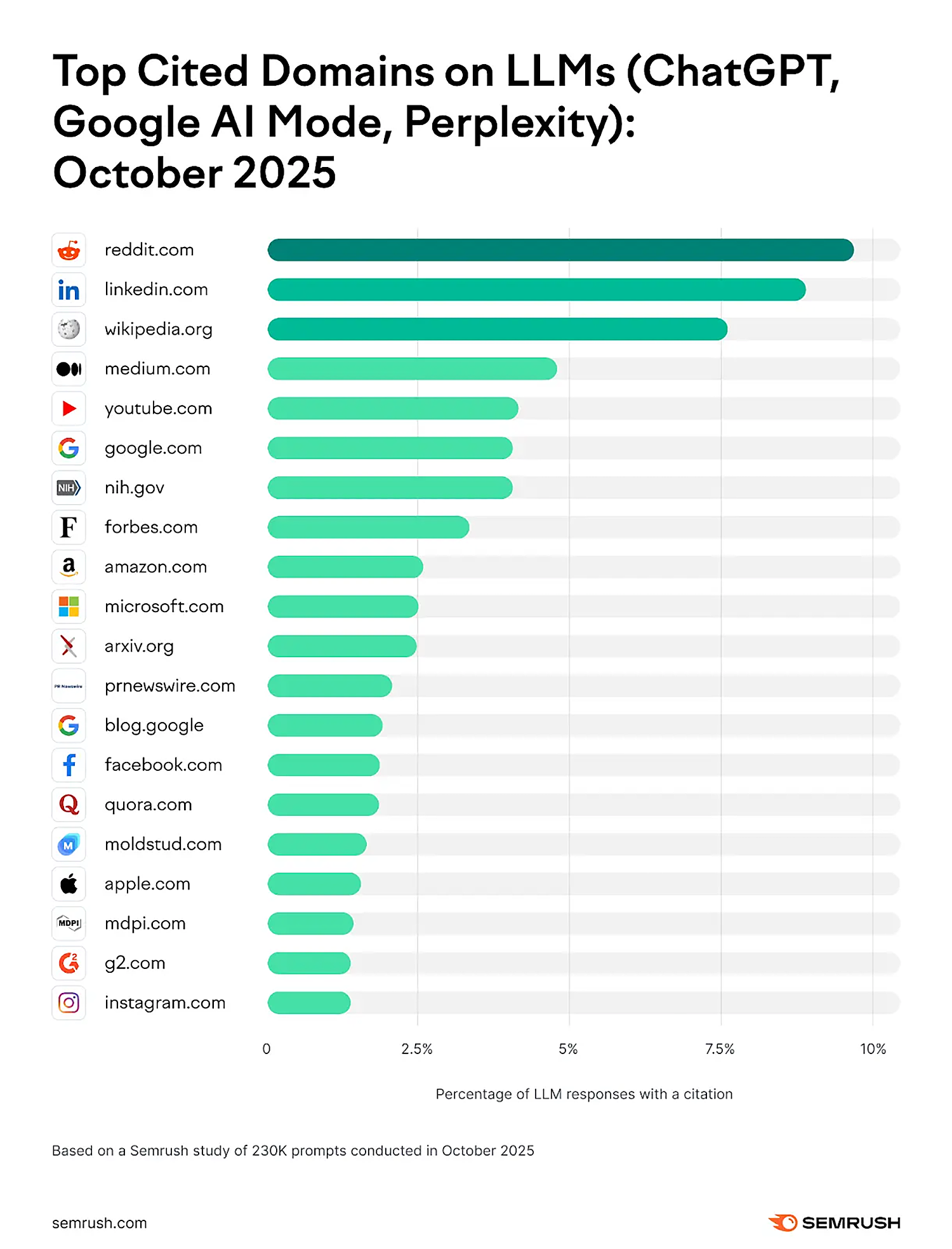

We analyzed citation patterns across ChatGPT, Gemini, and Claude over several months, and here's what kept showing up as source material:

Comparison and "best of" articles were by far the most commonly cited content type. When someone asks "what's the best project management tool for remote teams?", AI engines overwhelmingly pull from articles that already compare multiple options side by side. If your brand is mentioned in three different comparison articles on reputable sites, your odds of appearing in an AI response go way up.

Category explainers were the second most common. Think "What is a customer data platform?" or "How does AI brand monitoring work?" These definitional pieces help LLMs understand what category a brand belongs to, which turns out to be critical for whether you get mentioned.

What doesn't work as well? Purely promotional landing pages. Press releases with no editorial substance. Blog posts that are essentially keyword-stuffed fluff. AI engines seem remarkably good at distinguishing between content written to inform and content written to sell.

One pattern that really stood out: when multiple independent sources describe a company using the same terminology (say, "AI visibility platform" used consistently across G2, Capterra, and an industry blog), that repetition across different domains acts like a confidence signal. The LLM basically says, "Multiple trustworthy sources agree on what this company does—I can safely include it."

What Makes an LLM Actually Name Your Brand

After monitoring hundreds of brand-query combinations, we've identified the factors that most consistently predict whether a brand gets mentioned. They're interconnected, so thinking about them in isolation misses the point—but here's the breakdown.

Third-party coverage matters more than your own content. This was the biggest insight for us. You can write the best blog post in the world about your product, but if nobody else is writing about you, AI engines will hesitate to mention you. Independent reviews, industry analyses, editorial features—this is where credibility lives in the LLM world.

We tracked one B2B SaaS brand that had world-class on-site content but virtually no third-party coverage. Across 50 relevant prompts, they appeared in exactly zero AI responses. Then they got featured in three industry publications over two months. Their mention rate went from 0% to about 15% within weeks. One brand isn't proof, but we don't think that's a coincidence.
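To make "mention rate" concrete, here is a minimal sketch of how a figure like the one above could be computed from a batch of saved AI responses. It is an illustration, not our production pipeline: the function names, the brand "Acme CRM", and the sample responses are all hypothetical, and it assumes a simple whole-word, case-insensitive match counts as a mention.

```python
import re


def mentions_brand(response: str, brand: str) -> bool:
    """Return True if the brand appears as a whole word, ignoring case."""
    pattern = r"(?<!\w)" + re.escape(brand) + r"(?!\w)"
    return re.search(pattern, response, flags=re.IGNORECASE) is not None


def mention_rate(responses: list[str], brand: str) -> float:
    """Share of responses (0.0 to 1.0) that name the brand at least once."""
    if not responses:
        return 0.0
    hits = sum(mentions_brand(r, brand) for r in responses)
    return hits / len(responses)


# Hypothetical responses collected for one brand across four prompts.
responses = [
    "Popular options include Acme CRM and two open-source tools.",
    "It depends on team size and budget.",
    "Many teams start with a spreadsheet.",
    "acme crm is often recommended for small teams.",
]

print(f"{mention_rate(responses, 'Acme CRM'):.0%}")  # prints 50%
```

A plain string match like this will miss misspellings and abbreviations, so treat the result as a lower bound rather than an exact count.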

Semantic consistency is quietly important. If your website calls you a "revenue intelligence platform," but G2 categorizes you as "sales analytics software," and Gartner puts you in "sales engagement"—that inconsistency confuses LLMs. They struggle to classify you, so they often just leave you out.

The brands that get mentioned most reliably have very consistent positioning language across their own properties and third-party mentions. Same category labels, same core descriptors, same positioning statement. It sounds boring, but it works.
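One rough way to audit this yourself is to collect the category label each source uses for your brand and check how often the most common one appears. The sketch below assumes you have gathered those labels by hand. The function names and the scoring (share of sources that use the dominant label) are our own illustrative choices, not a standard metric.

```python
from collections import Counter


def normalize(label: str) -> str:
    """Lowercase and collapse whitespace so trivial variants compare equal."""
    return " ".join(label.lower().split())


def label_consistency(labels_by_source: dict[str, str]) -> tuple[str, float]:
    """Return the most common label and the share of sources that use it."""
    if not labels_by_source:
        raise ValueError("need at least one source")
    counts = Counter(normalize(label) for label in labels_by_source.values())
    top_label, top_count = counts.most_common(1)[0]
    return top_label, top_count / len(labels_by_source)


# The fragmented positioning from the example above: three sources, three labels.
fragmented = {
    "own website": "revenue intelligence platform",
    "G2": "sales analytics software",
    "Gartner": "sales engagement",
}

# A hypothetical brand with mostly consistent positioning.
consistent = {
    "own website": "AI visibility platform",
    "G2": "AI Visibility Platform",
    "Capterra": "ai visibility  platform",
    "industry blog": "AI brand monitoring tool",
}

_, share = label_consistency(fragmented)
print(f"{share:.0%}")  # prints 33%

label, share = label_consistency(consistent)
print(label, f"{share:.0%}")  # prints ai visibility platform 75%
```

Exact matching after normalization is deliberately strict. Near-synonyms count as different labels here, which fits the argument above: near-synonyms are precisely the kind of drift that makes classification harder.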

Intent alignment is tricky and underappreciated. A query like "best email marketing tools for small businesses" triggers a completely different mention pattern than "how does email marketing automation work?" The first one activates a recommendation mode—the LLM is trying to list options. The second activates an explainer mode—the LLM is trying to teach.

Your brand might appear for one type of query and not the other. We've seen brands that show up 40% of the time for "best X" queries but 0% for "how does X work" queries, simply because their content and third-party coverage are oriented toward one intent type.
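If you want to see this split in your own data, a simple starting point is to bucket prompts by intent and compute the mention rate per bucket. The sketch below uses a crude keyword heuristic to tell recommendation prompts from explainer prompts. The cue lists, function names, and sample results are all assumptions for illustration, and a real classifier would need to be more careful.

```python
from collections import defaultdict

# Hypothetical cue phrases; tune these for your own prompt set.
RECOMMENDATION_CUES = ("best ", "top ", " vs ", "alternatives", "recommend")
EXPLAINER_CUES = ("how does", "how do", "what is", "what are", "why ")


def classify_intent(prompt: str) -> str:
    """Label a prompt as 'recommendation', 'explainer', or 'other'."""
    text = prompt.lower()
    # Recommendation cues are checked first, so "what is the best X"
    # counts as a recommendation prompt.
    if any(cue in text for cue in RECOMMENDATION_CUES):
        return "recommendation"
    if any(cue in text for cue in EXPLAINER_CUES):
        return "explainer"
    return "other"


def mention_rate_by_intent(results: list[tuple[str, bool]]) -> dict[str, float]:
    """Group (prompt, was_mentioned) pairs by intent and compute each rate."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for prompt, mentioned in results:
        intent = classify_intent(prompt)
        totals[intent] += 1
        hits[intent] += int(mentioned)
    return {intent: hits[intent] / totals[intent] for intent in totals}


# Hypothetical monitoring results for one brand.
results = [
    ("best email marketing tools for small businesses", True),
    ("top email platforms for startups", False),
    ("how does email marketing automation work?", False),
    ("what is a drip campaign?", False),
]

print(mention_rate_by_intent(results))
# prints {'recommendation': 0.5, 'explainer': 0.0}
```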

Google AI Overview Is Its Own Thing

With Google's AI Overview expanding to more queries, it's tempting to lump it in with ChatGPT and Claude. But it operates under different logic.

In our monitoring, Google AI Overview tends to pull from content that's already ranking well in traditional search, but with a twist. It heavily favors content that can be cleanly extracted into snippets—structured answers, clear headings, definitional paragraphs. It's less about "who ranks #1" and more about "whose content can I most easily synthesize into a concise answer."

Cross-domain consistency matters here too. If five different sites all describe your brand as doing the same thing, Google AI Overview is more likely to confidently include you. If descriptions are fragmented or contradictory, you'll often get left out even if you rank well organically.

Where the Citations Actually Come From

One of the most interesting things we've learned from tracking citation patterns: it's not just "big sites" that get cited. The pattern is more nuanced than that.

Yes, industry publications and major editorial platforms show up frequently. But we also see niche directories, well-maintained comparison sites, and even individual blog posts from domain experts getting cited regularly—as long as the content is structured, specific, and clearly written.

The common thread isn't raw domain authority in the traditional SEO sense. It's what we'd call contextual trustworthiness. A specialized cybersecurity blog with 5,000 monthly visitors can outperform a major news site in AI citations for cybersecurity queries, because the LLM recognizes the topical depth.

This has big implications for smaller brands. You don't need coverage in The New York Times. You need coverage in the places that AI engines consider authoritative for your specific category.

Going Deep in Your Vertical Actually Pays Off

Generic, surface-level content about broad topics rarely drives AI brand mentions. What does work is going deep into your vertical.

We've watched this play out across dozens of brands on our platform. Companies that publish detailed, technical content about their specific use cases, complete with terminology that's native to their industry, consistently outperform those producing general-purpose marketing content.

Think about it from the LLM's perspective. When someone asks about "the best payment processing solution for SaaS subscription billing," the AI engine needs to pull from content that specifically addresses SaaS subscription billing, not just generic payment processing. Brands that have published content at that level of specificity have a structural advantage.

The takeaway: depth beats breadth. Every time.

Why We Built Our Monitoring Around This

Understanding these dynamics is why we built Genezio the way we did. We run real prompts across ChatGPT, Gemini, Claude, and Perplexity, track which brands get mentioned, analyze which sources get cited, and flag changes over time.
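As a rough illustration of the "flag changes over time" step, the sketch below compares mention rates from two monitoring runs and reports the brands that moved by more than a threshold. This is a simplified stand-in, not a description of how Genezio works internally: the function name, the 10-point threshold, and the brand data are all hypothetical.

```python
def flag_changes(
    previous: dict[str, float],
    current: dict[str, float],
    threshold: float = 0.10,
) -> dict[str, float]:
    """Return the change in mention rate for brands that moved by at least
    `threshold` between two runs. A brand missing from a run counts as 0.0."""
    flagged = {}
    for brand in previous.keys() | current.keys():
        delta = current.get(brand, 0.0) - previous.get(brand, 0.0)
        if abs(delta) >= threshold:
            flagged[brand] = round(delta, 4)
    return flagged


# Hypothetical mention rates from two consecutive weekly runs.
last_week = {"Brand A": 0.00, "Brand B": 0.40, "Brand C": 0.12}
this_week = {"Brand A": 0.15, "Brand B": 0.42}

changes = flag_changes(last_week, this_week)
for brand in sorted(changes):
    print(brand, f"{changes[brand]:+.0%}")
# prints:
# Brand A +15%
# Brand C -12%
```

A fixed threshold is the simplest possible rule. With small prompt sets, week-to-week noise can exceed it, so it is worth checking how many prompts sit behind each rate before acting on a flag.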

The gap between "what we think AI engines see" and "what AI engines actually mention" is often enormous. Traditional SERP tracking gives you one view. AI visibility monitoring gives you a completely different one.

And honestly, that's the biggest shift we're seeing right now. Brands that are invisible in AI responses—even though they dominate traditional search—are starting to feel the impact. The customer journey increasingly runs through an AI interface before anyone clicks a link.

So What Do You Actually Do About It?

If you've made it this far, here's the honest summary of what we think works in 2026:

Get third-party coverage. Seriously. This is table stakes. If independent sources aren't writing about you, AI engines won't either. Guest posts, industry reports, directory listings, analyst coverage—all of it feeds the credibility signal that LLMs look for.

Lock down your positioning language. Pick your category, pick your descriptors, and use them everywhere. Make sure third-party sources adopt the same language. Consistency creates confidence in the AI's classification logic.

Publish comparison-ready content. Articles that naturally position your brand alongside competitors in a structured, informative way are gold. This is the content type that gets cited most often in AI responses.

Go vertical, not horizontal. Deep expertise in your niche beats broad coverage of tangential topics. Focus on the queries your ideal customers are actually asking AI engines.

Monitor what AI engines actually say about you. Don't guess—measure. The landscape changes weekly, and what worked last month might not work next month.

Visibility in AI is no longer about being ranked. It's about being selected—and that selection process works in ways that are fundamentally different from everything we learned about traditional SEO.